Explorance Blog

Keep up to date with the latest information, tips and tactics about feedback analytics and insights

Featured Articles

Convince Your Manager to Attend Explorance World 2026: Step-by-step Guide and Free Template

Download a justification letter template and get role-specific talking points to secure manager approval for Explorance World 2026 in Boston.

6 min read

Faster, Smarter, More Accessible Feedback Analytics with Explorance Blue 9.6

Explore the latest Blue 9.6 update, featuring faster report generation, full accessibility compliance, improved project management, and enhanced AI-powered feedback analysis.

3 min read

Employee Engagement Strategies That Drive Measurable Results in 2026

HR leaders use these employee engagement strategies to reduce turnover, increase productivity, and build feedback loops that produce measurable results across teams.

16 min read

Latest Articles

Redesigning Higher Education for Lifelong Learners: 5 Takeaways from Spinnaker Group

Get Spinnaker Group's 5 takeaways on boosting student engagement, reducing loneliness, and breaking assumptions with innovative strategies.

8 min read

5 Key Benefits of Organizational Development

Organizational Development can enhance various aspects of the organization, such as its structure, culture, processes, and people.

6 min read

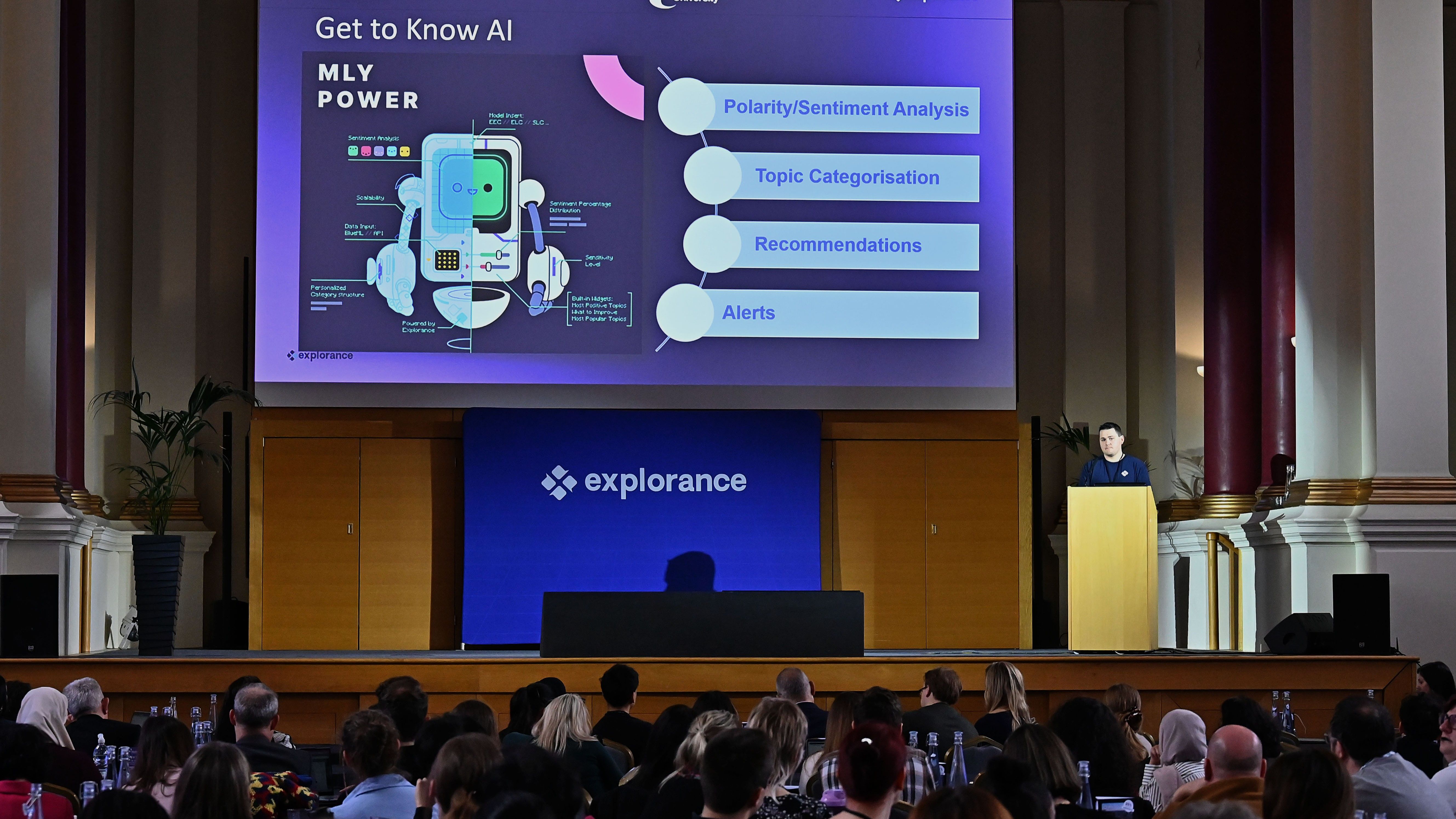

MLY 3.3 Release: Deeper Insights, Shared Intelligence, and Inclusive Access

Discover MLY 3.3, enhancing qualitative feedback analysis with dynamic filters, global custom analysis, WCAG 2.2 AA accessibility, and improved performance for scalable, inclusive insights.

4 min read

Reimagining Student Voice: From Historical Structures to Continuous Dialogue

Legacy feedback structures limit what universities actually hear. Explore how continuous dialogue and analytics can make student voice more meaningful.

7 min read

The Transformation of Student Engagement: From Partnership to Power (NUS Guest Post)

NUS President Amira Campbell explains why students are demanding accountability over feedback and what that shift means for higher education institutions.

7 min read

BlueX 2.1: Strengthening Trust, Governance, and Action Across the Feedback Lifecycle

BlueX 2.1 strengthens survey governance, protects respondent confidentiality, organizes workspaces, and boosts participation with QR code surveys and live reporting widgets.

3 min read

Explorance Blue Is WCAG 2.2 AA Certified: Why That Matters

Explorance Blue is WCAG 2.2 AA certified, delivering inclusive, barrier-free feedback experiences across institutions and organizations while reducing compliance risk and ensuring every voice is heard.

4 min read

5 Highlights From the 2026 Student Voices in Higher Education Conference

Higher education leaders shared strategies for embedding student voice in governance and feedback systems. See key takeaways from the 2026 London event.

7 min read

Connecting Learning to Business Value: Practical Approaches to Measuring Skills

Discover actionable strategies to connect skill development to business outcomes in L&D.

8 min read

Faster, Clearer, and More Self-Sufficient Learning Measurement with Explorance MTM 9.5

Explore what’s new in Explorance MTM 9.5—faster dashboards, smarter qualitative feedback filters, and a new Help Center for more self-sufficient L&D teams.

2 min read

Copyright 2026 © Explorance Inc. All rights reserved.